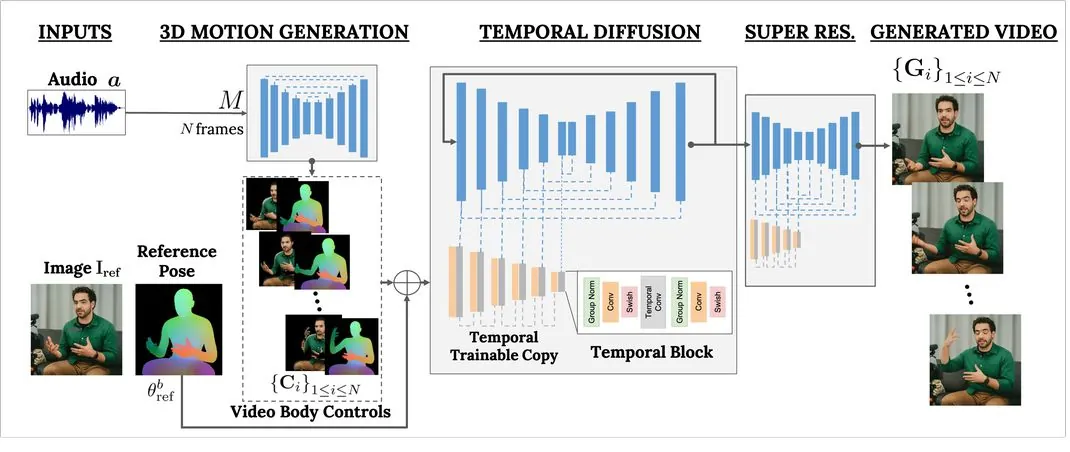

Google recently published a blog post on its GitHub page introducing the VLOGGER AI model. Users only need to provide a portrait photo and an audio clip. The model then makes the person in the photo “move,” with matching facial expressions, as if they were reading the audio content aloud.

VLOGGER AI is a multi-modal diffusion model designed for virtual portraits. It was trained on the MENTOR dataset, which contains more than 800,000 portraits and more than 2,200 hours of video. This allows VLOGGER to generate portrait videos of people of different ethnicities and ages, wearing different clothes and in different poses.

The researchers said: “Compared with previous multi-modal models, the advantage of VLOGGER is that it does not need to be trained on each person, does not rely on face detection and cropping, can generate complete images (not just faces or lips), and takes into account a wide range of scenarios (such as visible torsos or different subject identities) that are crucial for the correct synthesis of communicating humans.”

Google sees VLOGGER as a step toward a “universal chatbot,” where AI can interact with humans in a natural way through voice, gestures, and eye contact.

Other potential applications of VLOGGER include news reporting, education, and narration. It can also edit existing videos: if you are not satisfied with the facial expressions in a video, you can adjust them.
